Use a Layered Model, Not a Buzzword

Britannica defines artificial intelligence broadly as a computer or robot performing tasks associated with human intellectual processes such as reasoning, learning, perception, problem solving, and language. It also separates AI from machine learning: machine learning is one method used to achieve AI, not a synonym for the whole field. That distinction matters in enterprise architecture because the label AI is too broad to guide design, controls, or procurement.

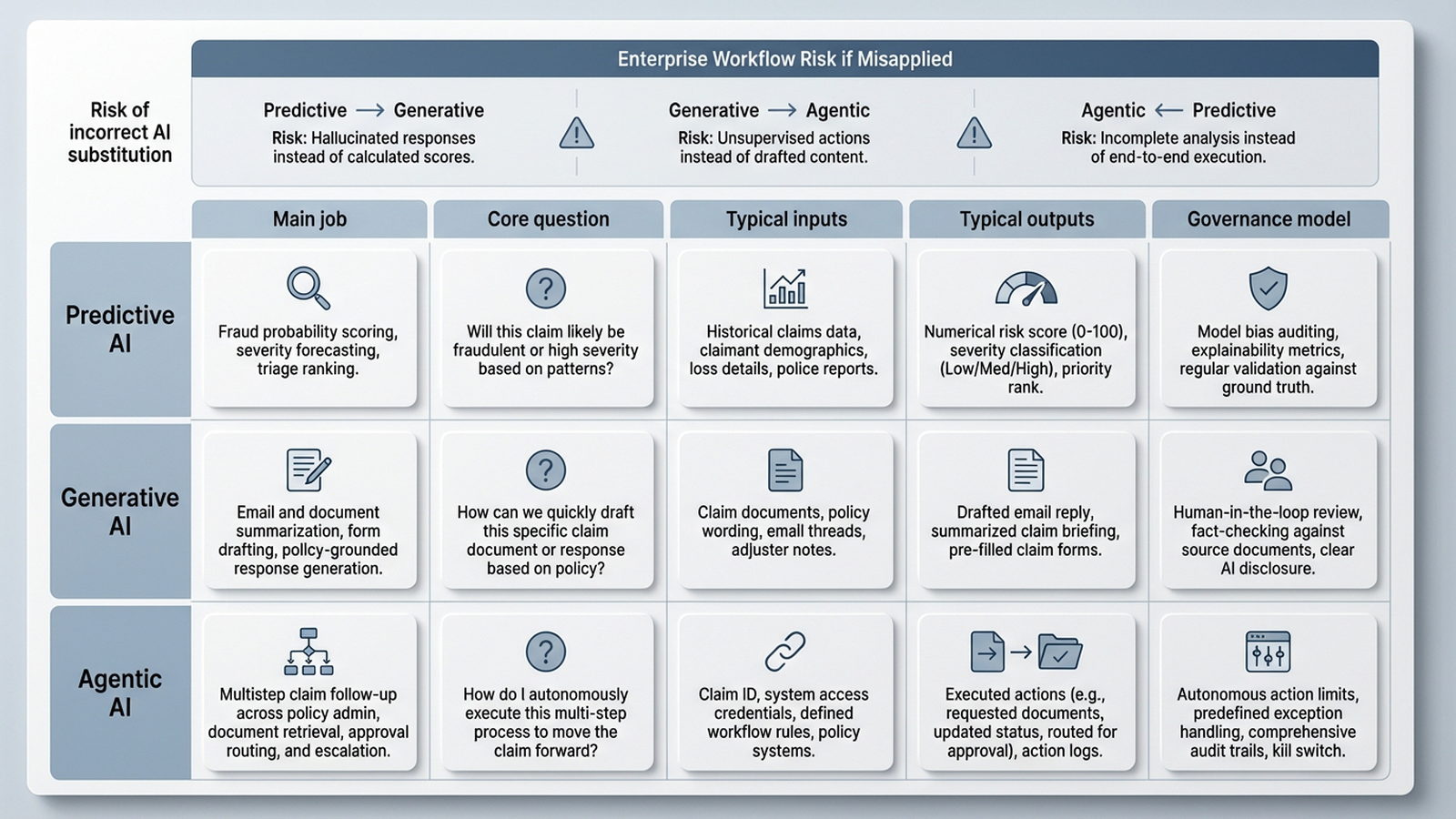

Oracle adds a more operational frame. It describes AI automation as the space between traditional RPA, which follows predefined rules and workflows, and newer AI agents that can plan, decide, and act across business systems. That framing suggests a practical enterprise taxonomy with three layers. Predictive AI answers what is likely to happen. Generative AI answers what a piece of content means or what draft should be produced. Agentic AI answers what sequence of actions should happen next.

In claims processing, that layered model prevents category mistakes. A predictive model might estimate claim complexity or likely escalation. A generative model might summarize an adjuster note or draft a claimant communication. An agent might gather documents, retrieve policy context, update systems, and request approval for the next step. If those layers are collapsed into one procurement story, teams often over-automate judgment and under-design controls.
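One way to keep the layers from collapsing into a single procurement story is to tag each capability with its layer and with whether it can change a system of record. The sketch below is illustrative only: the `Layer`, `Capability`, and `CATALOG` names, and the three catalog entries, are assumptions drawn from the claims examples above, not a vendor schema.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PREDICTIVE = "predictive"  # what is likely to happen
    GENERATIVE = "generative"  # what content means or what draft to produce
    AGENTIC = "agentic"        # what sequence of actions should happen next


@dataclass(frozen=True)
class Capability:
    name: str
    layer: Layer
    output_kind: str        # what the capability hands to the workflow
    has_side_effects: bool  # True if it can change a system of record


# Hypothetical catalog entries based on the claims examples in the text.
CATALOG = [
    Capability("estimate_claim_complexity", Layer.PREDICTIVE, "score", False),
    Capability("summarize_adjuster_note", Layer.GENERATIVE, "draft", False),
    Capability("gather_documents_and_update_queue", Layer.AGENTIC, "action", True),
]


def by_layer(catalog):
    """Group capability names by layer so each layer can be reviewed separately."""
    grouped = {layer: [] for layer in Layer}
    for cap in catalog:
        grouped[cap.layer].append(cap.name)
    return grouped


def needs_action_controls(cap: Capability) -> bool:
    """Capabilities that act on systems need controls before they run."""
    return cap.has_side_effects
```

Keeping `has_side_effects` separate from `layer` is a deliberate choice in this sketch: the control requirement follows from what a capability can change, not from the label attached to it.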

The most useful way to explain AI to enterprise leaders is by operating layer, not by hype category.

Predictive AI Is for Scoring, Forecasting, and Prioritizing

Oracle's explanation of AI automation places machine learning in a familiar enterprise role: models learn from historical data to make predictions about future events and help determine the next step in an automated process. Britannica's distinction is useful here as well, because it reminds leaders that machine learning is a method inside AI, not the whole answer. Predictive systems are strongest when the task is estimating probability, ranking cases, or forecasting demand from prior patterns.

Applied to claims, predictive AI belongs at the triage layer. Based on Oracle's description of ML-driven prediction, a claims team can use predictive models to estimate likely handling complexity, flag cases for fraud review, or forecast intake volumes for staffing. The output is not a final narrative or autonomous action. It is a score, ranking, classification, or forecast that informs downstream workflow.

The trade-off is that predictive systems are only as reliable as their training data, thresholds, and monitoring. When policy language changes, claim mix shifts, or upstream data quality drops, scores can drift while looking superficially precise. Recommendation: keep repeatable and auditable decisions deterministic whenever possible, and use predictive models to prioritize work rather than silently make high-impact coverage or settlement decisions.
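The recommendation above can be sketched as a triage function that only assigns a queue, plus a crude check for score drift. This is a minimal illustration under stated assumptions: the threshold of 0.7, the queue names, and the mean-shift tolerance are hypothetical values, and a production system would likely use a more robust drift statistic.

```python
from dataclasses import dataclass
from statistics import mean

REVIEW_THRESHOLD = 0.7  # assumed value; would be tuned and monitored in practice


@dataclass(frozen=True)
class TriageResult:
    claim_id: str
    score: float
    queue: str  # a work queue, never a coverage or settlement decision


def triage(claim_id: str, score: float) -> TriageResult:
    """Use a model score to prioritize work, not to decide the claim."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    queue = "priority_review" if score >= REVIEW_THRESHOLD else "standard"
    return TriageResult(claim_id, score, queue)


def mean_shift_exceeds(baseline, recent, tolerance=0.1) -> bool:
    """Crude drift signal: has the average score moved by more than `tolerance`?"""
    return abs(mean(recent) - mean(baseline)) > tolerance
```

The function returns a queue assignment and nothing else, which keeps the score in its role as an input to downstream workflow.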

Predictive AI should shape priorities and probabilities, not masquerade as final judgment.

Generative AI Is for Language, Documents, and Structured Drafts

Oracle describes natural language processing and large language models as the capabilities that let AI automation move beyond fixed rules. In that account, models can read larger text blocks, process unstructured content such as handwriting, extract meaning and intent, summarize information, populate forms, and generate natural-language responses. Oracle also notes that when these systems are paired with retrieval-augmented generation, they can answer questions more accurately because responses are grounded in enterprise data. Britannica's inclusion of large language models and NLP as core AI technologies supports the same boundary: this layer is fundamentally about language and content.

For a claims operation, this is the right tool for intake packets, adjuster notes, claimant emails, repair estimates, and call transcripts. A generative model can summarize a long file, extract the incident timeline into structured fields, draft a status update, or produce a first-pass explanation for a handler to review. Used well, it reduces clerical friction around documents rather than replacing claims policy logic.

The main failure mode is that generative systems can produce fluent text that sounds authoritative even when the grounding is weak. That is why grounded retrieval, prompt templates, approved source repositories, and human review matter. Recommendation: let generative AI draft, summarize, and normalize language-heavy work, but keep commitments, approvals, and policy interpretation inside governed business rules and accountable human roles.
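A release gate for generated drafts might look like the sketch below. It assumes each draft carries the names of the sources it was grounded in and the identity of the human who approved it. The `Draft` type, the `APPROVED_SOURCES` set, and the source names are hypothetical, and no model call is shown.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical list of repositories that drafts are allowed to cite.
APPROVED_SOURCES = {"policy_repository", "claims_file"}


@dataclass
class Draft:
    text: str
    sources: List[str]                 # where the content was retrieved from
    approved_by: Optional[str] = None  # set only after human review


def is_grounded(draft: Draft) -> bool:
    """A draft is grounded if it cites at least one source and all are approved."""
    return bool(draft.sources) and all(s in APPROVED_SOURCES for s in draft.sources)


def can_send(draft: Draft) -> bool:
    """Drafts leave the building only when grounded and signed off by a person."""
    return is_grounded(draft) and draft.approved_by is not None
```

In this arrangement the model produces the draft, but the decision to send stays with a named reviewer and a fixed source list.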

Generative AI is most valuable when it reduces language friction without becoming the system of record for judgment.

Agents Add Planning, Tool Use, and State, Which Changes the Risk Profile

Oracle describes AI agents as the next step beyond AI automation: systems that orchestrate multiple AI models and simpler machine learning processes to analyze, plan, and complete tasks autonomously across business systems. IBM's discussion of agentic engineering adds the discipline required to use that power safely. In IBM's framing, agentic workflows still need a human in the loop, clear governance frameworks, RAG-based grounding in real documentation, review loops, testing expectations, and guardrail configurations.

That is why agents are not just better chatbots. In a claims workflow, an agentic design could check whether required documents are present, retrieve policy clauses, ask another service for coverage context, draft a next-step recommendation, update a work queue, and escalate for approval when a threshold is crossed. What makes it agentic is not the presence of a model alone. It is the combination of planning, tool calling, retrieval, state tracking, and action across systems.

The trade-off is that every new capability expands the control surface. Hidden state can make behavior harder to audit. Broad tool permissions can turn a useful assistant into an uncontrolled operator. Brittle integrations can create partial actions that are hard to unwind. Recommendation: if an agent can act, it must have explicit scope, observable execution traces, policy checks before side effects, and a clean human fallback path.
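The four requirements in that recommendation can be sketched as a thin wrapper around tool calls. This is an illustrative design, not an API from Oracle or IBM: `GuardedToolRunner`, `EscalateToHuman`, the `update_queue` tool, and the payment-approval rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


class EscalateToHuman(Exception):
    """Signals that a human handler must take over this step."""


@dataclass
class GuardedToolRunner:
    allowed_tools: Dict[str, Callable[..., Any]]         # explicit scope
    policy_check: Callable[[str, Dict[str, Any]], bool]  # runs before side effects
    trace: List[Dict[str, Any]] = field(default_factory=list)  # execution trace

    def _record(self, tool: str, args: Dict[str, Any], outcome: str) -> None:
        self.trace.append({"tool": tool, "args": args, "outcome": outcome})

    def run(self, tool: str, **args: Any) -> Any:
        if tool not in self.allowed_tools:
            self._record(tool, args, "out_of_scope")
            raise EscalateToHuman(f"{tool} is outside the agent's scope")
        if not self.policy_check(tool, args):
            self._record(tool, args, "blocked_by_policy")
            raise EscalateToHuman(f"{tool} was blocked by policy")
        result = self.allowed_tools[tool](**args)
        self._record(tool, args, "executed")
        return result


# Hypothetical usage: the agent may update a work queue but never approve payment.
work_queue = []


def update_queue(claim_id: str, status: str) -> str:
    work_queue.append((claim_id, status))
    return "ok"


def no_payment_approvals(tool: str, args: Dict[str, Any]) -> bool:
    return args.get("status") != "approved_for_payment"


runner = GuardedToolRunner({"update_queue": update_queue}, no_payment_approvals)
runner.run("update_queue", claim_id="C-1", status="awaiting_documents")
```

Because the policy check runs before the tool is invoked, a blocked call leaves no partial action to unwind, and every attempt, including refused ones, appears in `runner.trace`.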

Agentic AI is defined less by conversation and more by coordinated action under governance.

In Claims Processing, Start Narrow and Scale Only After Evaluation

A practical rollout sequence is more important than a sophisticated demo. Recommendation: begin with deterministic automation wherever decisions are repeatable and auditable. In claims, that usually means rules-based routing, document presence checks, SLA timers, and system updates that already have stable business logic. Then add predictive scoring where prioritization is useful, and generative assistance where document volume creates administrative drag. Agentic patterns should come later, after the workflow, permissions, and exception paths are already clear.
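The deterministic starting point can be as plain as the sketch below, which combines a document presence check with an SLA timer. The required document names, the 10-day SLA, and the route labels are hypothetical placeholders for whatever the existing business logic specifies.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Hypothetical business rules; real values come from existing claims policy.
REQUIRED_DOCUMENTS = frozenset({"claim_form", "proof_of_loss"})
SLA_DAYS = 10


@dataclass(frozen=True)
class Claim:
    claim_id: str
    documents: FrozenSet[str]
    days_open: int


def route(claim: Claim) -> str:
    """Rules-based routing: same input, same output, easy to audit."""
    missing = REQUIRED_DOCUMENTS - claim.documents
    if missing:
        return "request_documents"
    if claim.days_open > SLA_DAYS:
        return "sla_escalation"
    return "standard_handling"
```

Nothing here involves a model, which is the point: predictive and generative layers can be added around a core like this once it is stable.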

IBM's guidance is helpful here because it emphasizes governance frameworks, human oversight, testing expectations, and iterative review loops rather than autonomy for its own sake. Oracle makes a complementary point about data: moving from process automation to agentic systems requires a comprehensive data strategy and a trusted, AI-ready pipeline across structured and unstructured data. In other words, agentic ambition without operational plumbing is usually architecture theater.

Evaluation should be concrete. Measure exception rates, approval latency, retrieval quality, model confidence bands, rework, and the percentage of cases that safely fall back to a human handler. Review not just model output but end-to-end workflow behavior. The question for leaders is simple: did this layer remove friction while preserving auditability, or did it move uncertainty deeper into the process?
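Several of those measures can be computed from per-case workflow records, as in the sketch below. The `CaseRecord` fields are assumptions about what a workflow log might capture. Retrieval quality and model confidence bands are left out because they need model-specific data, and the fallback rate here counts all handoffs without judging whether each one was safe.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional


@dataclass(frozen=True)
class CaseRecord:
    raised_exception: bool
    fell_back_to_human: bool
    reworked: bool
    approval_latency_minutes: Optional[float]  # None if no approval step occurred


def evaluate(cases: List[CaseRecord]) -> dict:
    """Summarize end-to-end workflow behavior, not just model output."""
    if not cases:
        raise ValueError("need at least one case")
    n = len(cases)
    latencies = [
        c.approval_latency_minutes
        for c in cases
        if c.approval_latency_minutes is not None
    ]
    return {
        "exception_rate": sum(c.raised_exception for c in cases) / n,
        "human_fallback_rate": sum(c.fell_back_to_human for c in cases) / n,
        "rework_rate": sum(c.reworked for c in cases) / n,
        "median_approval_latency_minutes": median(latencies) if latencies else None,
    }
```

Tracking these per layer, before and after each rollout step, gives leaders a direct way to answer the question posed above.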

The safest path is deterministic first, assistive AI second, agentic autonomy last and only under measurement.

Wrapping Up

Enterprise leaders do not need a longer list of AI buzzwords. They need a reliable way to match capability to workflow. Predictive AI is about probabilities and prioritization. Generative AI is about language and content. Agentic AI is about coordinated action across systems under policy, permission, and oversight. If that explanation is applied consistently, claims processing becomes a strong test case: automate the deterministic core, add predictive and generative layers where they remove friction, and introduce agents only when governance, observability, and escalation are designed into the architecture from the start.

Sources & References

- Beyond RPA Bots: What Happens When Automation Gets a Brain? — Oracle

- What is Agentic Engineering? — IBM

- Artificial intelligence (AI) | Definition, Examples, Types, Applications, Companies, & Facts — Britannica

- AI in Education: How Technology is Shaping the Future of Learning — Southern New Hampshire University

- AI Is Breaking Education. Rebecca Winthrop Has the Blueprint to Fix It. — Center for Humane Technology (Substack)